Gen AI Regulation and USAISI.pdf

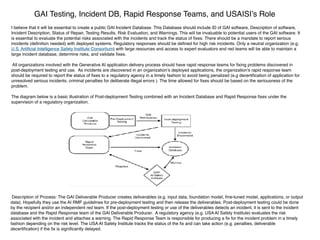

- 1. GAI Testing, Incident DB, Rapid Response Teams, and USAISI’s Role

I believe that it will be essential to create a public GAI Incident Database. Each entry in this database should include the ID of the GAI software, a description of the software, an incident description, the status of repair, testing results, a risk evaluation, and warnings. This will be invaluable to potential users of the GAI software. It is essential to evaluate the potential risks associated with the incidents and to track the status of fixes. There should be a mandate to report serious incidents (definition needed) with deployed systems. Regulatory responses should be defined for high-risk incidents. Only a neutral organization (e.g. the U.S. Artificial Intelligence Safety Institute Consortium) with large resources and access to expert evaluators and red teams will be able to maintain a large incident database, determine risks, and validate fixes.

All organizations involved in the Generative AI application delivery process should have rapid response teams for fixing problems discovered in post-deployment testing and use. As incidents are discovered in an organization’s deployed applications, the organization’s rapid response team should be required to report the status of fixes to a regulatory agency in a timely fashion to avoid being penalized (e.g. decertification of the application for unresolved serious incidents, criminal penalties for deliberate illegal errors). The time allowed for fixes should be based on the seriousness of the problem.

The diagram below is a basic illustration of Post-deployment Testing combined with an Incident Database and Rapid Response fixes under the supervision of a regulatory organization.

Description of Process: The GAI Deliverable Producer creates deliverables (e.g. input data, foundation model, fine-tuned model, applications, or output data). Ideally, the producer follows the AI RMF guidelines for pre-deployment testing and then releases the deliverables.
Post-deployment testing could be done by the recipient and/or an independent red team. If post-deployment testing or use of the deliverables detects an incident, the incident is sent to the incident database and to the Rapid Response Team of the GAI Deliverable Producer. A regulatory agency (e.g. the U.S. AI Safety Institute) evaluates the risk associated with the incident and attaches a warning. The Rapid Response Team is responsible for producing a fix for the incident in a timely fashion, depending on the risk level. The U.S. AI Safety Institute tracks the status of the fix and can take action (e.g. penalties, deliverable decertification) if the fix is significantly delayed.
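
To make the proposal more concrete, the incident record and the deadline tracking described above could be sketched roughly as follows. This is only an illustration under stated assumptions: the field names, the three risk levels, the `"open"`/`"fixed"` status values, and the fix windows in days are all hypothetical, since the proposal says only that the time allowed should depend on the seriousness of the problem.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from enum import Enum
from typing import List, Optional


class Risk(Enum):
    # Hypothetical risk levels; the proposal does not define a scale.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical fix windows (in days) per risk level.
FIX_WINDOW_DAYS = {Risk.LOW: 90, Risk.MEDIUM: 30, Risk.HIGH: 7}


@dataclass
class Incident:
    """One incident database entry, using the fields listed in the proposal."""
    software_id: str
    software_description: str
    incident_description: str
    reported_on: date
    repair_status: str = "open"          # assumed values: "open" or "fixed"
    testing_results: str = ""
    risk: Optional[Risk] = None          # set by the regulatory agency
    warnings: List[str] = field(default_factory=list)


def evaluate(incident: Incident, risk: Risk, warning: str) -> None:
    """Regulator step: record the risk evaluation and attach a warning."""
    incident.risk = risk
    incident.warnings.append(warning)


def fix_due(incident: Incident) -> Optional[date]:
    """Date by which a fix is expected, or None if risk is not yet evaluated."""
    if incident.risk is None:
        return None
    return incident.reported_on + timedelta(days=FIX_WINDOW_DAYS[incident.risk])


def is_overdue(incident: Incident, today: date) -> bool:
    """True if the incident is still unfixed after its fix window has passed."""
    due = fix_due(incident)
    return due is not None and incident.repair_status != "fixed" and today > due
```

In this sketch, a regulator would call `evaluate` when an incident arrives, and a periodic check with `is_overdue` would flag incidents whose fixes are significantly delayed and may therefore warrant action such as penalties or decertification.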