Software_Testing_Overview.pptx

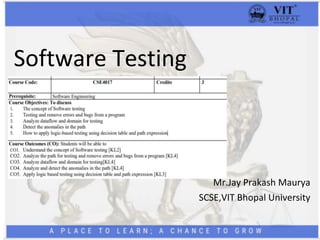

- 1. Software Testing Mr. Jay Prakash Maurya, SCSE, VIT Bhopal University

- 2. Outline • Introduction to Testing • Debugging • Purpose and goal of Testing • Dichotomies • Testing and Debugging • Model for Testing • Consequences of Bugs • Taxonomy of Bugs

- 3. Prerequisite

- 4. Testing • Testing is the process of exercising or evaluating a system or system components by manual or automated means to verify that it satisfies specified requirements. Debugging • Debugging is the process of finding and fixing errors or bugs in the source code of any software. When software does not work as expected, computer programmers study the code to determine why any errors occurred. They use debugging tools to run the software in a controlled environment, check the code step by step, and analyze and fix the issue.

- 5. • MYTH: Good programmers write code without bugs. (This is wrong!) • History shows that even well-written programs still have 1-3 bugs per hundred statements.

- 6. Phases in a tester's mental life: • Phase 0: (Until 1956: Debugging Oriented) • Phase 1: (1957-1978: Demonstration Oriented) • Phase 2: (1979-1982: Destruction Oriented) • Phase 3: (1983-1987: Evaluation Oriented) • Phase 4: (1988-2000: Prevention Oriented)

- 7. Purpose of Testing • To identify and show that the program has bugs. • To show that the program/software works. • To show that the program/software doesn’t work. Goal of Testing • Bug Prevention (Primary Goal) • Bug Discovery (Secondary Goal) • Test Design • A bug manifests as a deviation from expected behaviour.

- 13. • Environment Model: hardware and software (OS, linkage editor, loader, compiler, utility routines) • Program Model: simplified in order to be testable, yet complicated enough to explain unexpected behavior. • Bug Hypothesis: • Benign Bug Hypothesis: bugs are nice, tame, and logical • Bug Locality Hypothesis: a bug discovered within a component affects only that component's behavior • Control Bug Dominance: errors in the control structures • Code / Data Separation: bugs respect the separation of code and data • Lingua Salvator Est: language syntax and semantics eliminate bugs • Corrections Abide: a corrected bug remains corrected • Silver Bullets: a language, design method, representation, or environment grants immunity from bugs • Sadism Suffices: tough bugs need methodology and techniques • Angelic Testers: testers are better at test design than programmers are at code design

- 14. Test • Tests are formal procedures: inputs must be prepared, outcomes should be predicted, tests should be documented, commands need to be executed, and results are to be observed. All of these steps are subject to error. • Unit / Component Testing • Integration Testing • System Testing

- 15. Role of Models: • The art of testing consists of creating, selecting, exploring, and revising models. Our ability to go through this process depends on the number of different models we have at hand and their ability to express a program's behavior.

- 17. Task-1 [Class activity] • Focus on types of bugs in the software development process and how to handle these bugs. • https://web.cs.ucdavis.edu/~rubio/includes/ase17.pdf • Software bug prediction using object-oriented metrics (ias.ac.in)

- 18. Consequences of Bugs • Damage depends on: • Frequency • Correction cost • Installation cost • Consequences • Importance ($) = Frequency × (Correction cost + Installation cost + Consequential cost) • Consequences of bugs: • Mild • Moderate • Annoying • Disturbing • Serious • Very Serious • Extreme • Intolerable • Catastrophic • Infectious
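
The importance formula on this slide can be sketched in a few lines of Python. The function name and the sample numbers are assumptions made for illustration; the slide gives only the formula.

```python
def bug_importance(frequency, correction_cost, installation_cost, consequential_cost):
    """Importance ($) = Frequency * (Correction + Installation + Consequential cost)."""
    return frequency * (correction_cost + installation_cost + consequential_cost)


if __name__ == "__main__":
    # Hypothetical bug: occurs 4 times, costs $200 to correct,
    # $50 to install the fix, and $1000 in consequential damage each time.
    print(bug_importance(4, 200, 50, 1000))
```

Ranking bugs by this figure, not by how hard they are to fix, is one way to decide which ones deserve attention first.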

- 19. Software Testing Metrics • Process Metrics • Product Metrics • Project Metrics • Base Metrics • Calculated Metrics

- 20. Taxonomy of Bugs • There is no universally correct way to categorize bugs. The taxonomy is not rigid. • A given bug can be put into one or another category depending on its history and the programmer's state of mind. • The major categories are: (1) Requirements, Features, and Functionality Bugs (2) Structural Bugs (3) Data Bugs (4) Coding Bugs (5) Interface, Integration, and System Bugs (6) Test and Test Design Bugs.

- 21. Requirements and Specifications Bugs: • Requirements and the specifications developed from them can be incomplete, ambiguous, or self-contradictory. They can be misunderstood or impossible to understand. • Even specifications without flaws may change while the design is in progress, as features are added, modified, and deleted. • Requirements, especially as expressed in specifications, are a major source of expensive bugs. • Their share ranges from a few percent to more than 50%, depending on the application and environment. • What hurts most about these bugs is that they are the earliest to invade the system and the last to leave.

- 22. Feature Bugs: • Specification problems usually create corresponding feature problems. • A feature can be wrong, missing, or superfluous (serving no useful purpose). A missing feature or case is easier to detect and correct. A wrong feature could have deep design implications. • Removing the features might complicate the software, consume more resources, and foster more bugs.

- 23. Feature Interaction Bugs: • Providing correct, clear, implementable, and testable feature specifications is not enough. • Features usually come in groups of related features. The features of each group and the interactions of features within the group are usually well tested. • The problem is unpredictable interactions between feature groups or even between individual features. For example, your telephone is provided with call holding and call forwarding. The interactions between these two features may have bugs. • Every application has its own peculiar set of features and a much bigger set of unspecified feature interaction potentials, which result in feature interaction bugs.

- 24. Control and Sequence Bugs: • Control and sequence bugs include paths left out, unreachable code, improper nesting of loops, incorrect loop-back or loop-termination criteria, missing process steps, duplicated processing, unnecessary processing, rampaging GOTOs, ill-conceived (not properly planned) switches, spaghetti code, and, worst of all, pachinko code. • One reason control-flow bugs receive so much attention is that this area is amenable to theoretical treatment. • Most control-flow bugs are easily tested for and caught in unit testing.

- 25. Logic Bugs: • Bugs in logic, especially those related to misunderstanding how case statements and logic operators behave singly and in combination. • Also includes the evaluation of boolean expressions in deeply nested IF-THEN-ELSE constructs. • If the bugs are part of logical (i.e. boolean) processing not related to control flow, they are categorized as processing bugs. • If the bugs are part of a logical expression (i.e. a control-flow statement) that is used to direct the control flow, they are categorized as control-flow bugs.
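
As a small illustration of a logic bug caused by misreading how operators combine, the sketch below compares a buggy and a corrected boolean expression over the full truth table. The function names and the "order review" scenario are hypothetical and not taken from the slides.

```python
def needs_review_buggy(paid, in_stock):
    # Intended rule: review every order unless it is both paid and in stock.
    # Bug: `not` binds tighter than `and`, so this means (not paid) and in_stock.
    return not paid and in_stock


def needs_review_fixed(paid, in_stock):
    # Parentheses make the negation apply to the whole conjunction.
    return not (paid and in_stock)


def disagreements():
    """List every (paid, in_stock) input where the two versions differ."""
    return [
        (paid, in_stock)
        for paid in (False, True)
        for in_stock in (False, True)
        if needs_review_buggy(paid, in_stock) != needs_review_fixed(paid, in_stock)
    ]


if __name__ == "__main__":
    print(disagreements())
```

Enumerating the truth table is cheap for two or three inputs and exposes the cases a single hand-picked test would miss.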

- 26. Processing Bugs: • Processing bugs include arithmetic bugs, algebraic bugs, mathematical function evaluation, algorithm selection, and general processing. • Examples of processing bugs include incorrect conversion from one data representation to another, ignoring overflow, and improper use of greater-than-or-equal. • Although these bugs are frequent (12%), they tend to be caught in good unit testing.
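
Two of the processing bugs named above, improper use of greater-than-or-equal and ignoring overflow, can be sketched as follows. The discount rule, the 8-bit counter, and all function names are assumptions chosen only to make the bugs visible.

```python
def bulk_discount_buggy(quantity):
    # Hypothetical spec: orders of 100 units or more get a discount.
    # Bug: `>` was used where `>=` was required, so exactly 100 is excluded.
    return quantity > 100


def bulk_discount_fixed(quantity):
    return quantity >= 100


def add_u8_ignoring_overflow(a, b):
    # Simulates an unsigned 8-bit register: the sum silently wraps past 255.
    return (a + b) & 0xFF


def add_u8_checked(a, b):
    # Same addition, but overflow is reported instead of ignored.
    total = a + b
    if total > 0xFF:
        raise OverflowError(f"{a} + {b} does not fit in 8 bits")
    return total


if __name__ == "__main__":
    print(bulk_discount_buggy(100), bulk_discount_fixed(100))
    print(add_u8_ignoring_overflow(200, 100))
```

Both bugs only show up at boundary values (exactly 100, or a sum above 255), which is why unit tests that probe boundaries tend to catch them.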

- 27. Initialization Bugs: • Initialization bugs are common. Initializations can be improper or superfluous. • Superfluous initializations are generally less harmful but can affect performance. • Typical initialization bugs include forgetting to initialize variables before first use, assuming that they are initialized elsewhere, and initializing to the wrong format, representation, or type. • Explicit declaration of all variables, as in Pascal, can reduce some initialization problems.

- 28. Data-Flow Bugs and Anomalies: • Most initialization bugs are special cases of data-flow anomalies. • A data-flow anomaly occurs where there is a path along which we expect to do something unreasonable with data, such as using an uninitialized variable, attempting to use a variable before it exists, modifying and then not storing or using the result, or initializing twice without an intermediate use.
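
A minimal sketch of two of these anomalies in Python follows. The function names are hypothetical; the example only shows how "use before definition" and "define twice without an intermediate use" look in code.

```python
def total_use_before_define(prices):
    for price in prices:
        # Anomaly: `total` is read here before it was ever assigned.
        total = total + price
    return total


def total_double_init(prices):
    total = 0
    total = 0.0  # Anomaly: initialized twice with no use in between.
    for price in prices:
        total = total + price
    return total


def total_fixed(prices):
    total = 0  # Defined once, before its first use.
    for price in prices:
        total = total + price
    return total


def outcome(fn, prices):
    """Run fn and report either its result or the name of the error raised."""
    try:
        return fn(prices)
    except UnboundLocalError as exc:
        return type(exc).__name__


if __name__ == "__main__":
    print(outcome(total_use_before_define, [1, 2, 3]))
    print(outcome(total_fixed, [1, 2, 3]))
```

The first anomaly fails at run time; the second produces the right sum, so in practice it is more likely to be found by static analysis or review than by a failing test.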

- 29. Data Bugs • Data bugs include all bugs that arise from the specification of data objects, their formats, the number of such objects, and their initial values. • Data bugs are at least as common as bugs in code, but they are often treated as if they did not exist at all. • Code migrates to data: software is evolving towards programs in which more and more of the control and processing functions are stored in tables. • Because of this, there is an increasing awareness that bugs in code are only half the battle and that data problems should be given equal attention.

- 30. Coding bugs: • Coding errors of all kinds can create any of the other kinds of bugs. • Syntax errors are generally not important in the scheme of things if the source language translator has adequate syntax checking. • If a program has many syntax errors, then we should expect many logic and coding bugs. • Documentation bugs, which may mislead maintenance programmers, are also considered coding bugs.

- 31. Interface, integration, and system bugs: • External Interface • Internal Interface • Hardware Architecture • O/S Bug • Software Architecture • Control and sequence bugs • Resource management problems • Integration bugs • System bugs

- 32. TEST AND TEST DESIGN BUGS: • Testing: testers have no immunity to bugs. Tests require complicated scenarios and databases. • They require code or the equivalent to execute, and consequently they can have bugs. • Test criteria: even if the specification is correct, it is correctly interpreted and implemented, and a proper test has been designed, the criterion by which the software's behavior is judged may still be incorrect or impossible to apply.

- 33. Test Metrics • Generating software test metrics is the most important responsibility of the Software Test Lead/Manager. Test metrics are used to: 1. Make decisions for the next phase of activities, such as estimating the cost and schedule of future projects. 2. Understand the kind of improvement needed for the project to succeed. 3. Decide which process or technology should be modified, etc.

- 34. Example of Test Report • How many test cases have been designed per requirement? • How many test cases are yet to be designed? • How many test cases have been executed? • How many test cases have passed, failed, or been blocked? • How many test cases are not yet executed? • How many defects have been identified, and what is the severity of those defects? • How many test cases have failed due to one particular defect? etc.

- 35. Example of Software Test Metrics Calculation

    | S No. | Testing Metric | Data retrieved during test case development |
    |---|---|---|
    | 1 | No. of requirements | 5 |
    | 2 | The average number of test cases written per requirement | 40 |
    | 3 | Total no. of test cases written for all requirements | 200 |
    | 4 | Total no. of test cases executed | 164 |
    | 5 | No. of test cases passed | 100 |
    | 6 | No. of test cases failed | 60 |
    | 7 | No. of test cases blocked | 4 |
    | 8 | No. of test cases unexecuted | 36 |
    | 9 | Total no. of defects identified | 20 |
    | 10 | Defects accepted as valid by the dev team | 15 |
    | 11 | Defects deferred for future releases | 5 |
    | 12 | Defects fixed | 12 |

    Metrics to calculate: Percentage test cases executed, Test Case Effectiveness, Failed Test Cases Percentage, Blocked Test Cases Percentage, Fixed Defects Percentage, Accepted Defects Percentage, Defects Deferred Percentage

- 36. Metric calculations:
    1. Percentage test cases executed = (No. of test cases executed / Total no. of test cases written) × 100 = (164 / 200) × 100 = 82
    2. Test Case Effectiveness = (Number of defects detected / Number of test cases run) × 100 = (20 / 164) × 100 = 12.2
    3. Failed Test Cases Percentage = (Total number of failed test cases / Total number of tests executed) × 100 = (60 / 164) × 100 = 36.59
    4. Blocked Test Cases Percentage = (Total number of blocked tests / Total number of tests executed) × 100 = (4 / 164) × 100 = 2.44
    5. Fixed Defects Percentage = (Total number of defects fixed / Number of defects reported) × 100 = (12 / 20) × 100 = 60
    6. Accepted Defects Percentage = (Defects accepted as valid by dev team / Total defects reported) × 100 = (15 / 20) × 100 = 75
    7. Defects Deferred Percentage = (Defects deferred for future releases / Total defects reported) × 100 = (5 / 20) × 100 = 25
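
The seven calculations above can be reproduced with a short script. This is a sketch: the counts come from the example data on slide 35, while the dictionary keys and function names are assumptions introduced here.

```python
# Counts from the example data on slide 35.
SLIDE_35_DATA = {
    "written": 200,
    "executed": 164,
    "failed": 60,
    "blocked": 4,
    "defects_identified": 20,
    "defects_accepted": 15,
    "defects_deferred": 5,
    "defects_fixed": 12,
}


def percentage(part, whole):
    """Return part/whole as a percentage, rounded to two decimals."""
    return round(100 * part / whole, 2)


def compute_metrics(d):
    """Apply the slide-36 formulas to a dictionary of raw counts."""
    return {
        "executed_pct": percentage(d["executed"], d["written"]),
        "test_case_effectiveness": percentage(d["defects_identified"], d["executed"]),
        "failed_pct": percentage(d["failed"], d["executed"]),
        "blocked_pct": percentage(d["blocked"], d["executed"]),
        "fixed_defects_pct": percentage(d["defects_fixed"], d["defects_identified"]),
        "accepted_defects_pct": percentage(d["defects_accepted"], d["defects_identified"]),
        "deferred_defects_pct": percentage(d["defects_deferred"], d["defects_identified"]),
    }


if __name__ == "__main__":
    for name, value in compute_metrics(SLIDE_35_DATA).items():
        print(f"{name}: {value}")
```

Note the two different denominators: execution progress is measured against test cases written, while the failed and blocked percentages are measured against test cases executed.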

- 39. How to estimate? Software Testing Estimation Techniques • Work Breakdown Structure • 3-Point Software Testing Estimation Technique • Wideband Delphi technique • Function Point/Testing Point Analysis • Use-Case Point Method • Percentage distribution • Ad-hoc method

- 40. WBS

- 41. • Divide the whole project task into subtasks • Allocate each task to a team member • Effort Estimation for Tasks • Function Point Method • Three Point Estimation

- 42. Function Point Method • Total Effort: the effort to completely test all the functions. • Total Function Points: the total number of modules. • Estimate defined per Function Point: the average effort to complete one function point. This value depends on the productivity of the team member who will take charge of this task.

- 43. Total Effort and cost.

    | Complexity | Weightage | # of Function Points | Total |
    |---|---|---|---|
    | Complex | 5 | 3 | 15 |
    | Medium | 3 | 5 | 15 |
    | Simple | 1 | 4 | 4 |
    | Total Function Points | | | 34 |
    | Estimate defined per point | | | 5 |
    | Total Estimated Effort (Person Hours) | | | 170 |

    Estimate the cost for the tasks: Suppose your team's average salary is $5 per hour. The time required for the “Create Test Specs” task is 170 hours. Accordingly, the cost for the task is 5 × 170 = $850.
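
The arithmetic on this slide can be checked with a short sketch. The weights, counts, hours per point, and hourly rate are the slide's own figures; the variable and function names are assumptions.

```python
# (weight, number of function points) per complexity class, from slide 43.
FUNCTION_POINTS = {
    "complex": (5, 3),
    "medium": (3, 5),
    "simple": (1, 4),
}
HOURS_PER_POINT = 5   # "estimate defined per point"
RATE_PER_HOUR = 5     # average team salary in dollars per hour


def total_function_points(points):
    """Sum of weight * count over all complexity classes."""
    return sum(weight * count for weight, count in points.values())


def estimated_effort_hours(points, hours_per_point):
    return total_function_points(points) * hours_per_point


def estimated_cost(points, hours_per_point, rate_per_hour):
    return estimated_effort_hours(points, hours_per_point) * rate_per_hour


if __name__ == "__main__":
    print(total_function_points(FUNCTION_POINTS))
    print(estimated_effort_hours(FUNCTION_POINTS, HOURS_PER_POINT))
    print(estimated_cost(FUNCTION_POINTS, HOURS_PER_POINT, RATE_PER_HOUR))
```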

- 44. Three Point Estimation • The Test Manager needs to provide three values for each task: an estimate for the optimal (best-case) state, an estimate for the most likely case, and an estimate for what we think would be the worst-case scenario. • The parameter E is known as the Weighted Average. It is the estimate for the task “Create the test specification”. • Because E is a possible value and not a certain one, we must also know the probability that the estimate is correct.
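
The slide does not show how E is computed. A common choice, assumed here, is the PERT weighted average E = (a + 4m + b) / 6 with standard deviation SD = (b − a) / 6, where a is the best-case, m the most likely, and b the worst-case estimate. The sample values below are hypothetical, except that m = 170 hours echoes the function point estimate for the same task.

```python
def three_point_estimate(best, most_likely, worst):
    """PERT weighted average (assumed formula): E = (a + 4m + b) / 6."""
    return (best + 4 * most_likely + worst) / 6


def standard_deviation(best, worst):
    """PERT spread (assumed formula): SD = (b - a) / 6."""
    return (worst - best) / 6


if __name__ == "__main__":
    # Hypothetical estimates in person-hours for "Create the test specification".
    a, m, b = 120, 170, 200
    e = three_point_estimate(a, m, b)
    sd = standard_deviation(a, b)
    print(f"E = {e:.2f} person-hours, SD = {sd:.2f}")
    # Under these assumptions the task is likely to take between E - SD and E + SD.
    print(f"likely range: {e - sd:.2f} to {e + sd:.2f}")
```

The standard deviation is what addresses the last bullet: it turns the single value E into a range whose width reflects how uncertain the estimate is.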

- 45. Thanks